A Manager's Rubric for AI Quality Control

A technical framework could be the secret to helping managers combat workslop.

Sydney Scott

Editorial Strategist, AI

Workday

A technical framework could be the secret to helping managers combat workslop.

Sydney Scott

Editorial Strategist, AI

Workday

Artificial intelligence is now a key part of any enterprise structure, with generative AI, specifically, woven into every corner of work. Research shows 85% of employees save up to seven hours a week using these tools. But there’s a catch: roughly 40% of those time savings are lost because workers must fix low-quality or incorrect outputs—or as Dr. Kate Niederhoffer, chief scientist at BetterUp Labs coined it, workslop.

This creates a hidden drag on talent. To bridge the gap between speed and quality, there’s a technical framework for evaluating AI that might do the trick—SEAL. Short for Scoring, Evaluation, and Analysis of Large Language Models, it’s a technical rubric that organizations can adapt to help non-technical managers review AI.

While keeping a human in the loop sounds simple, it often turns into guesswork in practice. Without a clear standard, one manager’s "good enough" is another's workslop. Originally developed by Workday engineers to ensure enterprise-grade reliability, SEAL provides a structured way for teams to remain consistent and catch risks early.

By adopting this framework to combat AI slop, organizations can move past trial-and-error and finally reclaim the hours lost to fixing low-quality outputs.

Report

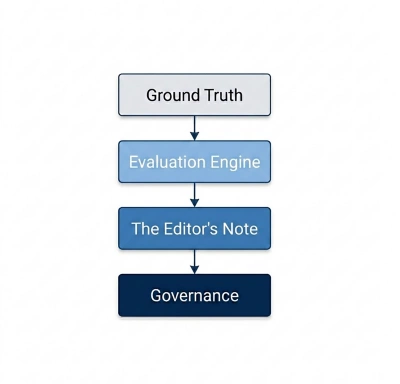

SEAL brings structure to the black box of AI. In a technical setting, SEAL tests how an AI model performs against specific data. It moves AI review away from vibes—judging work on how it sounds—and toward metric-based facts.The framework uses three main parts:

By adopting and adapting the SEAL framework, managers become strategic auditors combating slop and protecting the company from costly hallucinations.

Non-technical managers do not need to review code to stop workslop. Instead, they can adapt the SEAL framework into a repeatable audit. By following this rubric, leaders move from blind trust to a "trust but verify" workflow. This ensures that AI-assisted output—from strategy decks to payroll reports—meets the same rigorous bars for truth as human-only work.

Here are four key steps to help teams adapt the SEAL framework:

Start by aligning standards and messaging. Pick five to 15 of the best examples of past company work. Use these as a reference to ensure employees base AI prompts on internal facts rather than generic internet noise. This embeds sharpness and institutional knowledge into every output.

Data experts aren’t the only employees who can take advantage of SEAL measurements. Teams can translate the framework into business alignment KPIs by checking AI output against criteria such as:

The most effective part of the rubric is the Detailed Score Explanation. Instead of a vague percentage, look for the narrative note. A SEAL-based audit might flag that "the summary missed three key risks found in the source text." This allows the manager to act as an editor, focusing only on the specific gaps identified.

AI models can degrade over time. Use a simple dashboard to track if the time spent fixing drafts is going up or down. If quality scores drop below a set baseline, it’s time to refresh the Gold Standard library or update the prompts.

By adopting and adapting the SEAL framework, managers step into the role of strategic auditors—protecting the organization from the risks of slop and costly hallucinations. Ultimately, a standardized quality rubric empowers leaders to spend less time policing AI and more time on the sophisticated analysis that drives a business forward.

Report