Why ‘Good Enough’ Data Is a CFO’s Biggest AI Risk

In the AI age, inadequate data integrity can pose a threat to the balance sheet. Learn how to avoid the risk.

Blaise Radley

Editorial Strategist

Workday

The allure of AI in finance is undeniable: the promise of autonomous forecasting, real-time risk mitigation, and a level of operational efficiency that was science fiction a decade ago. But as the initial hype settles into implementation, a sobering reality is emerging for the C-suite: An AI system is only as credible as the data behind it.

“There is an increasing amount of pressure on business leaders to use data in today’s hyper-competitive environment,” Southard Jones, chief product officer at Tableau, said in a Salesforce study. “Yet, fragmented enterprise data and complex analytics tools are common obstacles leaders face that can significantly hinder confident decision-making.”

For a CFO, the stakes of “good-enough” data have shifted to the point where it poses a fundamental threat to the integrity of the balance sheet.

In the pre-AI era, largely accurate data was manageable. If a spreadsheet had a few inconsistencies or a legacy database contained some noise, a skilled analyst could spot the outlier and correct it before a quarterly review. Human intuition acted as the final filter.

AI removes that filter. Machine learning models don't know when a data point is an entry error versus a market trend and might simply incorporate it into their logic. Feeding mediocre data into sophisticated algorithms can result in scaling inaccuracies at a speed no manual process can catch.

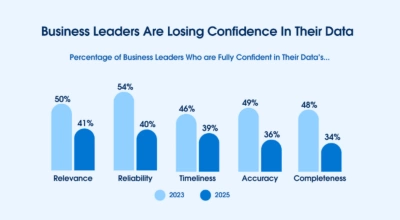

The Salesforce survey of 552 business leaders found a multi-year decline in confidence in various aspects of their data. The trend is potentially most troubling where the finance function is concerned.

While often framed in technical terms, data quality is now increasingly a matter of risk management for modern CFOs. Consider the following scenarios where less-than-perfect data creates genuine liability:

Automated compliance failures: If AI-driven audit tools are trained on incomplete historical data, they may overlook patterns of non-compliance, leaving the firm vulnerable to regulatory fines.

Skewed capital allocation: AI models used for predictive budgeting can lead to disastrous hallucinations if they aren’t grounded in high-fidelity data, resulting in millions of dollars diverted to the wrong initiatives.

Erosion of investor trust: In an era where financial transparency is scrutinized by algorithms and humans alike, a single AI-generated report based on noisy data can trigger a crisis of confidence.

The reality check: A model that is 90% accurate sounds impressive—until it’s clear that the 10% margin of error potentially represents millions in misallocated capital or a missed regulatory requirement.

Investment in AI is often viewed as an innovation spend. But to protect the organization, the CFO might need to view data cleansing and governance as an essential infrastructure spend, no different from maintaining a physical plant or a secure cloud network.

Research by Drexel University and Precisely found that just 12% of organizations report the quality of their data as sufficient for AI.

Persuading the board to slow down the AI sprint to fix the data marathon is a difficult conversation but very likely a necessary one. Transitioning from average-quality data to AI-ready data helps ensure the tools tasked with helping steer the company are actually looking at the right map.

Before signing off on the next major AI capital expenditure, finance leaders might want to consider some traits of a healthy data ecosystem. If these indicators are flashing red, the risk of “Garbage In, Liability Out” rises sharply.

Can your team trace a data point from a front-end report back to its original source? In an AI world, data without visibility is a liability.

The goal: Full visibility into how data is collected, transformed, and cleaned. If finance teams can’t audit the input, they can’t defend the output.
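For teams that want to see what this kind of traceability could look like in practice, here is a minimal Python sketch. It assumes a hypothetical `TracedValue` structure in which every reported figure carries its source system and the list of transformations applied to it. The class, the source name, and the figures are illustrative assumptions, not a description of any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class TracedValue:
    """A reported figure that carries its own audit trail (hypothetical structure)."""
    value: float
    source: str                                  # system of record for the raw number
    steps: list = field(default_factory=list)    # transformations applied, in order

    def apply(self, description, fn):
        """Return a new TracedValue with fn applied and the step recorded."""
        return TracedValue(fn(self.value), self.source, self.steps + [description])


# Illustrative figures only: a raw ledger amount in cents, converted and rounded.
raw = TracedValue(1_234_567.0, source="general_ledger.invoices")
reported = (
    raw.apply("convert cents to dollars", lambda v: v / 100)
       .apply("round to whole dollars", lambda v: float(round(v)))
)

print(reported.value)   # the figure shown in the report
print(reported.source)  # the original system of record
print(reported.steps)   # every transformation between the two
```

Each transformation returns a new object rather than editing the old one, so the raw figure stays available for audit alongside the reported one.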

Financial data loses value and accuracy over time. AI models trained on stale 2023 market conditions will struggle to navigate the volatility of 2026.

The goal: Automated pipelines that prioritize real-time or near-real-time ingestion, ensuring models aren’t making decisions based on yesterday’s news.
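A freshness check can be as simple as comparing each feed's last update against an agreed maximum age. The sketch below is one possible approach in Python. The feed names, timestamps, and the 24-hour threshold are assumptions chosen for illustration.

```python
from datetime import datetime, timedelta, timezone


def stale_sources(last_updated, max_age, now=None):
    """Return the names of sources whose last update is older than max_age."""
    now = now or datetime.now(timezone.utc)
    return sorted(name for name, ts in last_updated.items() if now - ts > max_age)


# Hypothetical feeds and timestamps, for illustration only.
now = datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc)
feeds = {
    "fx_rates": now - timedelta(minutes=5),
    "accounts_payable": now - timedelta(hours=30),
    "market_prices": now - timedelta(days=400),
}

# Flags the two feeds that have not been refreshed within the last 24 hours.
print(stale_sources(feeds, max_age=timedelta(hours=24), now=now))
```

The right threshold will differ by data type: exchange rates may need refreshing within minutes, while a monthly close may tolerate days.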

How often do different departments present conflicting numbers for the same metric (e.g., gross margin or customer acquisition cost)?

The goal: A unified data layer. If your AI pulls from three different versions of a revenue spreadsheet, inconsistent or fabricated outputs are all but guaranteed.
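One way to measure how often departments disagree is to compare their reported values metric by metric. The Python sketch below assumes each department's figures are available as a simple mapping. The department names, metric names, values, and tolerance are hypothetical.

```python
def conflicting_metrics(reports, tolerance=0.0):
    """Return metrics whose values differ across departments by more than tolerance.

    reports maps department name -> {metric name -> reported value}.
    """
    by_metric = {}
    for dept, metrics in reports.items():
        for name, value in metrics.items():
            by_metric.setdefault(name, {})[dept] = value
    return {
        name: values
        for name, values in by_metric.items()
        if len(values) > 1
        and max(values.values()) - min(values.values()) > tolerance
    }


# Hypothetical figures: finance and sales disagree on gross margin, all agree on CAC.
reports = {
    "finance": {"gross_margin": 0.42, "cac": 310.0},
    "sales": {"gross_margin": 0.47, "cac": 310.0},
    "marketing": {"cac": 310.0},
}

conflicts = conflicting_metrics(reports, tolerance=0.01)
print(conflicts)
```

Running a check like this before any model training surfaces the disagreements that a unified data layer would need to resolve.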

Much of a firm’s institutional knowledge is trapped in unstructured formats, such as PDFs, scanned invoices, and buried email threads.

The goal: High percentages of structured, labeled data. If your data requires a human to interpret it before it makes sense, an AI will likely misinterpret it.

Does your organization have a formal process for flagging and correcting data errors when they are spotted?

The goal: A closed-loop system where data errors found during manual audits are fed back into the system to retrain the AI, preventing the same mistake from happening twice.

By focusing on these indicators, the CFO shifts the conversation from, “How fast can we deploy AI?” to, “How safely can we scale our insights?”

Investing in these health markers is a form of capital preservation. It ensures that when the AI finally delivers its autonomous forecast, finance teams have the data-backed confidence to act on it. While the initial investment in data integrity may seem like a back-office expense, the long-term dividends include a clear, auditable trail for regulators that proves AI decisions are grounded in verified facts, as well as a sustainable competitive advantage: While competitors are busy troubleshooting poor outputs, prepared organizations will be making confident, high-velocity moves.

In an AI-driven economy, trust is the ultimate currency. For a CFO, protecting that asset becomes critical: with shareholders, with regulators, and with finance teams.
